the aural encosion theory...

...proposes that each species has a unique auditory framework that limits its ability to fully understand the nuances of another species' vocalizations.

It suggests that if we could tune our hearing to match that of another species, like a dog, we might better appreciate the complexity of their communication.

Conversely, if dogs could be trained to interpret human vocalizations more accurately, it could lead to enhanced interspecies understanding.

The theory raises questions about the potential for bridging the gaps in communication between different species.

(Conceived in the late 90s)

Podcast deep-dive by NotebookLM

Prescience of the past meets the technology of the future...

Using AI to Decode Animal Communication (2023) by Aza Raskin

Species-Specific Auditory Frameworks – Both theories recognize that each species has evolved a unique perceptual framework for communication. Raskin discusses how belugas communicate up to 150 kilohertz while humans can only hear up to 20 kilohertz - a perfect example of how one species cannot fully appreciate the nuance of another species' communication because their "ear" isn't tuned to it.

The Translation Problem – "If a dog laughed, would we be able to differentiate that from normal dog sounds?" ...Raskin's work directly addresses this through AI - attempting to decode and translate animal vocalizations that have always been there but have been imperceptible to humans.

Expanding Perceptual Apertures – Raskin repeatedly emphasizes: "Our ability to understand is limited by our ability to perceive." This is essentially the aural encosion theory in action - he's using AI as a tool to "tune our ears" to perceive what was always being communicated.

Bidirectional Communication Gap – The aural encosion theory suggests that if human vocalizations all sound similar to dogs, perhaps dogs' vocalizations all sound similar to us. Raskin demonstrates this empirically - showing that 97% of beluga communication data is thrown away because researchers can't distinguish who's speaking or separate overlapping calls.

The Solution: "Retuning" – The aural encosion theory proposes helping species tune their hearing to appreciate each other's vocal range. Raskin's AI work is essentially creating that "retuning" technology - building models that can perceive and distinguish nuances in animal communication that humans cannot naturally detect.

The aural encosion theory explains why we can't understand; Raskin's project shows how we might be able to.

The aural encosion theory could actually serve as the philosophical foundation for understanding why projects like Earth Species are necessary - we're not just missing data, we're fundamentally "encosed" in our own species-specific perceptual limitations.

Theory in Practice: Project CETI...

Project CETI (Cetacean Translation Initiative) – The most comprehensive application of the aural encosion theory is currently happening in the waters off Dominica, where a collaboration between MIT, Harvard, Oxford, UC Berkeley, and Google Research is attempting to understand sperm whale communication for the first time in history.

The Perception Problem – Sperm whales communicate in ways humans cannot naturally perceive - rapid-fire click patterns at frequencies and speeds beyond our auditory processing. This is the aural encosion theory in its purest form: an entire language exists, but we're "encosed" in our human perceptual framework, unable to distinguish the nuances without technological assistance.

AI as the Universal Transcoder – CETI deploys hundreds of synchronized underwater microphones, aerial drones tracking whale positions from above, and swimming robots with on-board cameras - all feeding data into machine learning models. These AI systems can perceive and pattern-match what humans cannot: distinguishing individual whales in overlapping calls, detecting micro-variations in click sequences, correlating vocalizations with specific behaviors. The AI isn't limited by human hearing range or processing speed - it's the "retuning" mechanism the theory proposes.

Four-Phase Validation – CETI's methodology proves they're not just collecting data, they're testing understanding: Phase 1 captures the communication (raw acoustic data). Phase 2 decodes the structure (identifying phonemes, syntax, individual "voices"). Phase 3 links form to meaning (correlating vocalizations with observed behaviors - feeding, socializing, navigating). Phase 4 validates comprehension (synthesizing whale sounds and testing if whales respond appropriately - the ultimate proof of understanding).

Interdisciplinary Necessity – Understanding another species' communication cannot be solved by a single discipline. CETI combines marine biology (whale behavior), linguistics (language structure), computer science (pattern recognition), robotics (synchronized data capture), and oceanography (acoustic propagation). The aural encosion theory predicted this: breaking through species-specific perceptual barriers requires tools and perspectives beyond any single field.

The Historic Goal – CETI's stated mission is to "understand another species' communication for the first time in human history." This validates the core premise of the aural encosion theory: we've been surrounded by complex communication from other species all along, but our perceptual limitations have kept us "encosed" in ignorance. AI provides the first practical path to break through.

What Sarah is doing with Breathe Conservation and the One Ocean Swim complements this perfectly - she's working to eliminate ocean plastic so that species like sperm whales can survive long enough for us to finally understand them. Protecting the ocean isn't just environmental activism; it's preserving the very subjects of our first real attempt at interspecies communication.

Breaking the 2,000-year assumption...

Bird Linguistics Research (2024) by Professor Toshitaka Suzuki – the first person to provide conclusive evidence that birds use language to communicate

The Assumption Barrier – For over 2,000 years, since Aristotle, we've assumed that only humans are capable of complex speech. Animal sounds were thought to reflect emotion, not meaning. This assumption itself is a form of aural encosion - we've been "encosed" in the belief that language is uniquely human, preventing us from recognizing the linguistic complexity in other species' communication.

Words, Not Just Emotions – Suzuki's 20-year study of Japanese tits proved that birds use specific words (like "ja" for snake) that refer to objects in the world, not just emotional states. This directly challenges the encosion - we couldn't perceive their language because we assumed it didn't exist. The aural encosion theory suggests we're limited by our perceptual framework; Suzuki shows we're also limited by our assumptions about what constitutes language.

Mental Images and Meaning – Suzuki's experiments demonstrated that birds form mental images from words - when they hear "ja" (snake), they actively search for snake-like objects. This proves the words have referential meaning, not just emotional content. The aural encosion theory proposes that species can't fully appreciate each other's communication; Suzuki's work shows that once we break through our assumptions, we can begin to decode the meaning.

Grammar and Syntax – Perhaps most remarkably, Suzuki discovered that birds combine words with grammar - "pitsubi gigi" (alert and gather) works, but "gigi pitsubi" doesn't. This sentence structure is essential for meaning, just like "dog bites man" versus "man bites (hot) dog" in human language. The aural encosion theory suggests each species has a unique communication framework; Suzuki reveals that framework includes grammatical rules as complex as our own.

Cross-Species Understanding – Suzuki found that tree sparrows understand the tit birds' calls and use them as warnings, even though they're different species. This suggests that some species can "tune their ears" to understand others' communication - exactly what the aural encosion theory proposes might be possible. The sparrows have learned to decode the tits' language, breaking through their own species-specific limitations.

Curiosity Over Technology – Suzuki emphasizes that what's needed isn't AI or advanced technology, but rather "a curious mind and to really pay attention to the world around us." This aligns with the aural encosion theory's premise - the first step to understanding other species' communication is recognizing that we've been encosed in our assumptions. Once we pay attention, we can begin to perceive what was always there.

The aural encosion theory explains why we haven't recognized animal language; Suzuki's work proves that animal language exists and shows us how to begin understanding it - by questioning our 2,000-year-old assumptions and paying attention to what's actually being communicated.

Advancements beyond audio...

This "encosion" idea can be generalised beyond audio to an entire biological filter stack.

- Temporal encosion – different creatures sample time differently (e.g. higher "frame rate" perception). What we experience as "normal motion" may look like "slow cinema" to a fly.

- Spectral encosion – different creatures access different parts of the electromagnetic spectrum (e.g. UV patterns on flowers that look plain to us).

- Chemical encosion – ecosystems communicate with signals we don't natively sense (pheromones, volatile organic compounds, etc).

Could AI be the missing universal transcoder? Humans are biological: our sensory organs and brains filter what we perceive as the world.

AI isn't biological and doesn't have a species-specific sensorium apart from what it has been trained on. It can ingest raw or transformed data (ultrasound, infrasound, UV-derived imagery, time-compressed signals) and model patterns, without needing those patterns to be "pleasant" or "natural" to a human.

AI isn't just a tool - it's plausibly the first practical mechanism for transcoding information between species.

What if we could build a system that translates or retunes signals into the human-perceptible domain (or creates hybrid, artistic renderings) without claiming we've achieved perfect "meaning"?

Straw man proposal...

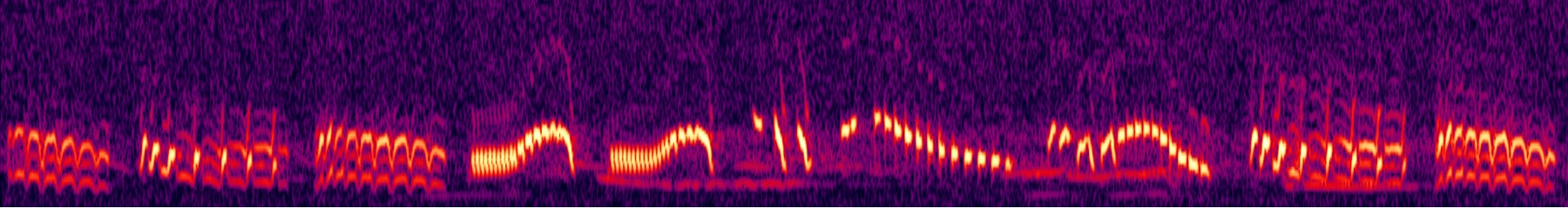

What if we 'retuned' bird speech into a more human-perceptible domain? A very rough demonstration of slowing down and altering pitch to a more human-like register:
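For anyone who wants to try the idea, here is a minimal sketch of one way to slow a recording down and lower its pitch together. It is not necessarily how the demonstration above was made: the function names, the 6 kHz test tone standing in for a bird call, and the slow-down factor of 4 are all illustrative assumptions. It stretches the signal in time with plain linear interpolation, so playing the result at the original sample rate makes it last longer and sound proportionally lower.

```python
import math


def slow_down(samples, factor):
    """Stretch a signal in time by `factor` using linear interpolation.

    Played back at the original sample rate, the result lasts about
    `factor` times longer and every frequency is divided by `factor`.
    """
    if factor <= 0:
        raise ValueError("factor must be positive")
    if len(samples) < 2:
        return list(samples)
    out_len = int((len(samples) - 1) * factor) + 1
    out = []
    for i in range(out_len):
        pos = i / factor                      # position in the original signal
        left = int(pos)
        right = min(left + 1, len(samples) - 1)
        frac = pos - left
        out.append(samples[left] * (1 - frac) + samples[right] * frac)
    return out


def estimate_frequency(samples, sample_rate):
    """Rough pitch estimate for a clean tone: count upward zero crossings."""
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)
    return crossings * sample_rate / len(samples)


SAMPLE_RATE = 44_100

# Hypothetical stand-in for a bird call: half a second of a pure 6 kHz tone.
chirp = [math.sin(2 * math.pi * 6_000 * n / SAMPLE_RATE)
         for n in range(SAMPLE_RATE // 2)]

# Assumed factor of 4: the tone becomes about four times longer and lower.
retuned = slow_down(chirp, factor=4)

print(round(estimate_frequency(chirp, SAMPLE_RATE)))
print(round(estimate_frequency(retuned, SAMPLE_RATE)))
```

The same arithmetic applies to the beluga figure quoted earlier: bringing a 150 kHz signal under the 20 kHz human limit would need a slow-down factor of at least 150 / 20 = 7.5. A real recording would also need to be captured at a sample rate high enough to contain those frequencies in the first place, and this kind of retuning only makes the sound audible; it says nothing about what the sound means.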

Some other references...

Ted Chiang's "Story of Your Life" (1998) – In this science fiction novella, the protagonist, a linguist, attempts to decipher an alien language that is completely different from human language in its structure and perception of time.

Ludwig Wittgenstein's "Language Games" (1953) – The philosopher Wittgenstein proposed that language is inextricably tied to the context and "form of life" in which it is used. He suggested that understanding a language requires understanding the rules and contexts of its use, which may differ between communities or species.

Thomas Nagel's "What Is It Like to Be a Bat?" (1974) – In this philosophical essay, Nagel argues that the subjective experience of a bat's perception (primarily through echolocation) is fundamentally inaccessible to human understanding, as our own perceptual framework is so different. This idea parallels the challenges of understanding another species' auditory world.

Frans de Waal's "Are We Smart Enough to Know How Smart Animals Are?" (2016) – This non-fiction book explores the complexities of animal cognition and communication, emphasizing the need to study animals on their own terms rather than through a human-centric lens.

Douglas Adams' Babel fish – In "The Hitchhiker's Guide to the Galaxy" (1978), the Babel fish is a small, yellow creature that, when placed in one's ear, allows the user to instantly understand any language spoken to them. This concept is similar to the idea of tuning our hearing to understand the nuances of another species' communication.